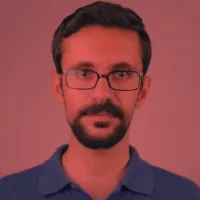

Thanks to the rise of artificial intelligence, robots have made great advances in voice communication in recent months. Researchers are now turning their attention to facial expressions, with the aim of one day making these robots genuinely social.

Emo, a robotic face that is still in development, can now make eye contact. It also uses two AI models to anticipate a person's smile before it appears, so that it can smile back.

The first model predicts a person's upcoming facial expression by analyzing subtle changes in their face. The second generates the motor commands that change the robot's own expression to match. The aim is to anticipate human reactions so that the robot always shows the appropriate expression, which could help it earn people's trust.

The challenge lies not in generating these facial expressions but in timing them. According to the researchers working on the project, Emo can predict an approaching smile about 840 milliseconds before the person smiles, which gives it enough time to smile at the same moment.

Emo's head is currently equipped with 26 actuators, which allow it to produce a wide range of subtle facial expressions. To support these interactions, the researchers also built a high-resolution camera into the pupil of each eye. The robot learned to anticipate expressions by watching hours of video of human faces.

Emo and its future incarnations could have far-reaching applications: a robot that displays a form of "empathy" could prove useful in fields such as communication, education and therapy.